The Test Suite and Results

In the sections below, we’ll walk you through what we tested, and the results for each. These tests are designed to arm you with the information you need to make the best decision for your type of use.

For each set of results, you can see the analysis for each model of computer under both Windows XP and Windows 7. If you want more detail on single vs. multiple processors, or on an individual Mac model, you may want to review the spreadsheet.

As you look through the charts below, take note of whether faster is represented by longer or shorter bars (see the lower left corner of each chart).

For the launch tests (launching the VM, Windows, and Applications), we had the option of an "Adam" test and a "Successive" test. Adam tests are run after the computer has been completely restarted (hence avoiding both host and guest OS caching). Successive tests are repeated without restarting the machine in between, and can benefit from caching. Both mimic real use situations.

The tests used were selected specifically to give a real-world view of what VMware Fusion and Parallels Desktop are like to run for many users. We eliminated any tests that ran so briefly (i.e., were so fast) that we could not create statistically significant results, or whose differences were imperceptible.

For some of the analysis, we "normalized" results by dividing the result by the fastest result for that test across all the machine configurations. We did this specifically so that we could make comparisons across different groups, and to be able to give you overview results combining a series of types of tests, and computer models.
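The normalization step described above can be sketched in a few lines of Python. This is an illustration only, not the tooling used for the analysis: the function name, configuration labels, and timings are made up, and the sketch assumes results are times in seconds, so the fastest result is the smallest one.

```python
def normalize_to_fastest(times):
    """Divide every timing by the fastest (smallest) timing in the set.

    `times` maps a configuration label to a duration in seconds.
    The fastest configuration ends up at 1.0; slower ones are > 1.0.
    """
    if not times:
        raise ValueError("no results to normalize")
    fastest = min(times.values())
    if fastest <= 0:
        raise ValueError("timings must be positive")
    return {config: seconds / fastest for config, seconds in times.items()}


if __name__ == "__main__":
    # Hypothetical timings for one test across three made-up configurations.
    launch_times = {"config A": 42.0, "config B": 63.0, "config C": 84.0}
    for config, score in normalize_to_fastest(launch_times).items():
        print(f"{config}: {score:.2f}x the fastest time")
```

Because every normalized score is a unitless ratio, scores from tests that take seconds and tests that take minutes can be combined without the longer tests dominating.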

Instead of a plain "average" or "mean", overall conclusions are drawn using a "geomean" (geometric mean), a type of average that is less influenced by extreme outliers than a plain mean. Geomean is the same averaging methodology used by SPEC tests, PCMark, Unixbench, and others, and it helps protect against result skewing. (If you are interested in how it differs from a mean: instead of adding the set of numbers and then dividing the sum by the count of numbers in the set, n, the numbers are multiplied together and then the nth root of the resulting product is taken.)
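To make the difference concrete, here is a minimal Python sketch of a geometric mean next to a plain mean. The sample scores are invented for illustration. The function computes the nth root of the product by averaging logarithms, which is mathematically equivalent and avoids overflow on long lists; Python 3.8 and later also ship `statistics.geometric_mean`, which does the same job.

```python
import math


def geomean(values):
    """Geometric mean: the nth root of the product of n positive numbers."""
    values = list(values)
    if not values:
        raise ValueError("geomean needs at least one value")
    if any(v <= 0 for v in values):
        raise ValueError("geomean is only defined for positive values")
    # exp(mean(log(v))) equals (v1 * v2 * ... * vn) ** (1 / n).
    return math.exp(math.fsum(math.log(v) for v in values) / len(values))


def mean(values):
    """Plain arithmetic mean, for comparison."""
    values = list(values)
    return math.fsum(values) / len(values)


if __name__ == "__main__":
    # Hypothetical normalized scores: three typical results and one outlier.
    scores = [1.0, 1.0, 1.0, 10.0]
    print(f"mean:    {mean(scores):.2f}")
    print(f"geomean: {geomean(scores):.2f}")
```

With these invented scores the plain mean is pulled up to 3.25 by the single outlier, while the geomean stays below 2.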

For those interested in the benchmarking methodologies, see the more detailed testing information in Appendix A. For the detailed results of the tests used for the analysis, see Appendix B. Both appendices are available on the MacTech web site.

Launch and CPU Tests

There are three situations in which users commonly launch a virtual machine:

- Launch the virtual machine from "off" mode, including a full Windows boot.

- Launch the virtual machine from a suspended state, and resume from suspend (Adam).

- Launch the virtual machine from a suspended state, and resume from suspend (Successive).

For the first test, we started at the Finder and launched the virtualization application, which was set up to immediately launch the virtual machine. The visual feedback is fairly different between Parallels Desktop and VMware Fusion when Windows first starts up. Windows actually continues its startup work after reaching the desktop, and in some cases it can take quite some time to complete its boot process. Most users don’t care if things continue in the background, so long as they aren’t held up.

As a result, we focused on timing to the point of actually accomplishing something. In this case, we hovered over the Task Bar icons and launched the Windows Security Center window (XP), or the networking interface icon (Windows 7). The test ended when the window started to render. This gave us a real-world scenario of being able to actually do something, as opposed to Windows just looking like it was booted.

The primary difference between the last two types of VM launch test is that the computer is fully rebooted (both the virtual machine and Mac OS X) in between the "Adam" tests. The Successive tests launch the virtual machines and restore them without restarting the Mac in between.

Successive tests benefit from both Mac OS X and possibly virtual machine caching, and are significantly faster. However, you may only see these types of situations if you are constantly switching in and out of your virtual machine.

As with all of our tests, we performed these tests multiple times to handle the variability that can occur. Of these, we took the best result for each product.

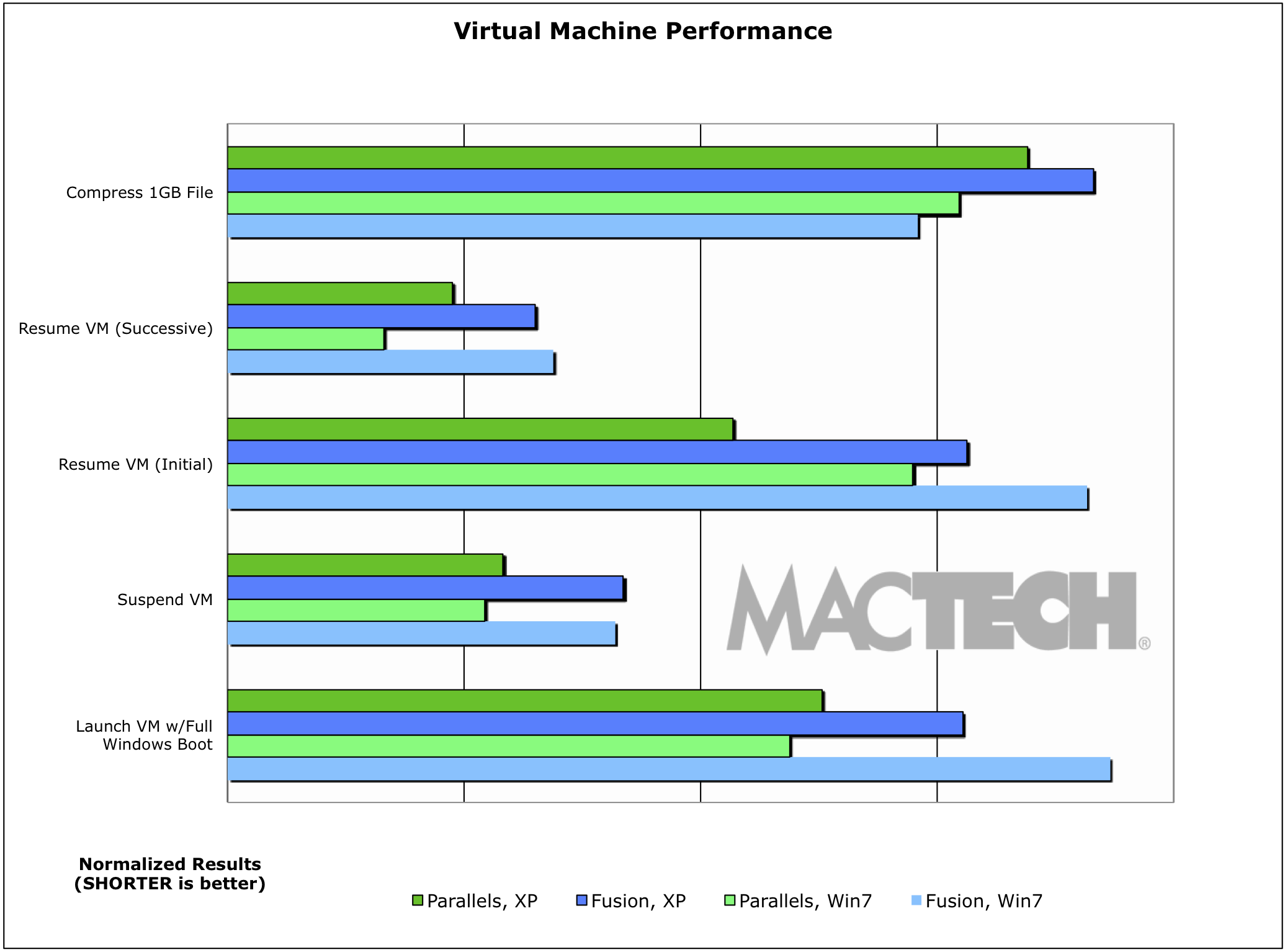

Virtual Machine Performance

Clearly, virtual machines with more memory take longer to restore, and that accounts for some of the differences (remember, we selected RAM configurations based on VMware Fusion’s default, which varied by OS and Mac model). One thing to remember as a virtualization user is that if you are switching in and out of a VM often, you may want to think about using less RAM, not more. In fact, you should use only as much as you need for the best experience under either virtualized environment. (We suggest 512MB to 1GB for most people, and 512MB to 768MB on 2GB MacBooks.)

Most benchmarking suites measure CPU performance using file compression as at least one part of their analysis. We did the same. As a matter of interest, we used compression instead of decompression, because with today’s fast computers, decompression is actually much closer to a file copy than it is to CPU work. Compression requires the system to perform analysis to do the compression, and is therefore a better measurement of CPU.
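For readers who want to see the compression-versus-decompression gap for themselves, the following Python sketch times both directions with the standard `zlib` module. It is not the compression tool or data set used in these tests; the payload, the helper names, and the repeat count are assumptions chosen for illustration. It takes the best of several runs, mirroring the "best result" approach used for the launch tests.

```python
import os
import time
import zlib


def make_payload(size=1_000_000):
    """Build test data: roughly half repetitive text, half random bytes."""
    sentence = b"The quick brown fox jumps over the lazy dog. "
    text = sentence * (size // (2 * len(sentence)))
    return text + os.urandom(size - len(text))


def best_time(func, arg, repeats=3):
    """Run func(arg) several times and return the shortest duration in seconds."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        func(arg)
        best = min(best, time.perf_counter() - start)
    return best


if __name__ == "__main__":
    payload = make_payload()
    packed = zlib.compress(payload, 9)  # level 9 = maximum compression effort

    compress_s = best_time(lambda data: zlib.compress(data, 9), payload)
    decompress_s = best_time(zlib.decompress, packed)

    print(f"compress:   {compress_s * 1000:.1f} ms")
    print(f"decompress: {decompress_s * 1000:.1f} ms")
```

On most machines the compression time should come out noticeably larger than the decompression time, which is the reason for preferring compression as a CPU measurement.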

VMware Fusion performed better in the compression test under Windows 7, and Parallels Desktop did better in the same test under Windows XP. For tests related to Windows boot time, suspend and resume, Parallels Desktop was noticeably quicker than VMware Fusion.
