An Apple patent application (publication number 20110074931) for systems and methods for an imaging system using multiple image sensors has appeared at the US Patent & Trademark Office. It suggests that Apple is considering future iPhones and iPads that can take 3D photos.

Per the patent: “Systems and methods may employ separate image sensors for collecting different types of data. In one embodiment, separate luma, chroma and 3-D image sensors may be used. The systems and methods may involve generating an alignment transform for the image sensors, and using the 3-D data from the 3-D image sensor to process disparity compensation. The systems and methods may involve image sensing, capture, processing, rendering and/or generating images.”

For example, one embodiment may provide an imaging system, including: a first image sensor configured to obtain luminance data of a scene; a second image sensor configured to obtain chrominance data of the scene; a third image sensor configured to obtain three-dimensional data of the scene; and an image processor configured to receive the luminance, chrominance and three-dimensional data and to generate a composite image corresponding to the scene from that data. The inventors of the patent are Brett Bilbrey and Guy Cote.
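To make the three-sensor idea concrete, here is a minimal Python sketch of how an image processor might merge the data. The assumptions are ours, not Apple's: the chroma sensor sits a fixed horizontal baseline to the right of the luma sensor, both images are already aligned (rectified), the depth map is registered to the luma image, and color is stored as full-range BT.601 YCbCr. Each luma pixel fetches its chroma from the column shifted by the disparity implied by its measured depth (focal length in pixels times baseline, divided by depth). The function names are hypothetical.

```python
# Hypothetical sketch, not Apple's implementation: luma + offset chroma + depth -> RGB.

def ycbcr_to_rgb(y, cb, cr):
    """Full-range BT.601 (JPEG-style) YCbCr to 8-bit RGB, clamped to 0..255."""
    def clamp(v):
        return max(0, min(255, int(round(v))))
    r = y + 1.402 * (cr - 128)
    g = y - 0.344136 * (cb - 128) - 0.714136 * (cr - 128)
    b = y + 1.772 * (cb - 128)
    return clamp(r), clamp(g), clamp(b)


def composite(luma, cb, cr, depth, focal_px, baseline_m):
    """Merge a luma image with chroma planes from a second, horizontally offset sensor.

    Assumed geometry: the chroma sensor sits baseline_m to the right of the luma
    sensor, so a scene point at luma column x appears at chroma column
    x - disparity, with disparity = focal_px * baseline_m / depth.
    """
    height, width = len(luma), len(luma[0])
    out = []
    for row in range(height):
        out_row = []
        for col in range(width):
            # The shift comes directly from the measured depth; nothing is inferred from edges.
            disparity = focal_px * baseline_m / depth[row][col]
            src = min(width - 1, max(0, col - int(round(disparity))))  # clamp at image border
            out_row.append(ycbcr_to_rgb(luma[row][col], cb[row][src], cr[row][src]))
        out.append(out_row)
    return out


# One-row toy frame: uniform luma, a reddish patch at chroma column 1, everything 1 m away.
luma = [[100, 100, 100, 100]]
cb = [[128, 128, 128, 128]]
cr = [[128, 200, 128, 128]]
depth = [[1.0, 1.0, 1.0, 1.0]]
print(composite(luma, cb, cr, depth, focal_px=100.0, baseline_m=0.01))
# → [[(100, 100, 100), (100, 100, 100), (201, 49, 100), (100, 100, 100)]]
```

With a 1-pixel disparity, the red patch seen at chroma column 1 is placed under luma column 2. Because the shift is recomputed from each pixel's own depth sample, the same loop could run on every frame; occlusions and sub-pixel shifts, which a real pipeline would need to handle, are ignored here.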

Here’s Apple’s background and summary of the invention: “The use of separate luma and chroma image sensors, such as cameras, to capture a high quality image is known. In particular, the use of separate luma and chroma image sensors can produce a combined higher quality image when compared to what can be achieved by a single image sensor.

“Implementing an imaging system using separate luma and chroma image sensors involves dealing with alignment and disparity issues that result from separate image sensors. Specifically, known approaches deal with epipolar geometry alignment by generating a transform that can be applied to the luma and chroma data obtained by the separate image sensors. Known approaches deal with stereo disparity compensation using a software-based approach. The software-based approach extrapolates from the image data obtained by the luma and chroma image sensors. For example, one approach is to perform edge detection in the image data to determine what disparity compensation to apply.

“The approaches described herein involve a paradigm shift from the known software-based approaches. While the art has been directed to improvements in the software-based approaches, such improvements have not altered the fundamental approach. In particular, the extrapolation from the image data employed by the software-based approaches is not deterministic. As such, situations exist in which the software approaches must guess at how to perform stereo disparity compensation.

“For example, in software-based approaches that employ edge detection, an adjustment is made to attempt to compensate for offsets between edges in the chroma image and edges in the luma image. Depending on the image data, such software-based approaches may need to guess as to which way to adjust the edges for alignment. Guesses are needed to deal with any ambiguity in the differences between the luma and chroma image data, such as regarding which edges correspond. The guesses may be made based on certain assumptions, and may introduce artifacts in the composite image obtained from combining the compensated luma and chroma image data.
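The ambiguity the inventors describe is easy to reproduce in a toy example. The Python sketch below is a hypothetical illustration, not code from the patent or from any shipping product: it finds edges along one scanline of a luma row and a chroma-derived row, then scores each candidate horizontal shift by how many chroma edges land on a luma edge. The names `edge_positions` and `candidate_shifts` and the threshold value are assumptions made for the example.

```python
# Hypothetical toy illustration of edge-based disparity matching and its ambiguity.

def edge_positions(row, threshold):
    """Indices where neighbouring samples differ by more than threshold (a crude 1-D edge detector)."""
    return [i for i in range(1, len(row)) if abs(row[i] - row[i - 1]) > threshold]


def candidate_shifts(luma_edges, chroma_edges, max_shift):
    """Score every horizontal shift in [-max_shift, max_shift] by how many shifted
    chroma edges coincide with a luma edge, and return all shifts tied for best."""
    luma_set = set(luma_edges)
    scores = {s: sum(1 for e in chroma_edges if e + s in luma_set)
              for s in range(-max_shift, max_shift + 1)}
    best = max(scores.values())
    return [s for s, score in scores.items() if score == best]


# A repeating texture (stripes, a fence, tiles) seen by both sensors.
luma_row = [0, 0, 10, 10, 0, 0, 10, 10, 0, 0]
chroma_row = [0, 0, 0, 0, 10, 10, 0, 0, 0, 0]

luma_edges = edge_positions(luma_row, threshold=5)      # [2, 4, 6, 8]
chroma_edges = edge_positions(chroma_row, threshold=5)  # [4, 6]
print(candidate_shifts(luma_edges, chroma_edges, max_shift=2))  # [-2, 0, 2]
```

Three different shifts explain the data equally well, so an edge-matching approach has to fall back on an assumption to pick one, which is where the artifacts mentioned above can creep in.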

“The paradigm shift described in this disclosure involves a hardware-based approach. A hardware-based approach as described herein does not involve extrapolation, guessing or making determinations on adjustment based on assumptions. Rather, a hardware-based approach as described herein involves a deterministic calculation for stereo disparity compensation, thus avoiding the problem with ambiguity in the differences between the luma and chroma image data in the known software-based approaches.
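By contrast, once depth is measured, the disparity between two rectified sensors follows from standard pinhole stereo geometry: disparity in pixels equals focal length in pixels times the baseline between the sensors, divided by the depth of the point. The quoted patent text does not state a formula, so treat this as the textbook relation rather than Apple's stated method; the function name and the example numbers are hypothetical.

```python
# Hypothetical helper: textbook rectified-stereo relation, not a formula quoted from the patent.

def disparity_px(depth_m, focal_px, baseline_m):
    """Disparity in pixels for a point at depth_m seen by two rectified pinhole
    cameras separated horizontally by baseline_m."""
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    return focal_px * baseline_m / depth_m


# Assumed phone-scale numbers: 1000 px focal length, 10 mm between sensors.
for depth in (0.5, 2.0, 10.0):
    print(depth, disparity_px(depth, focal_px=1000.0, baseline_m=0.01))
# Nearer objects shift more: 20.0, 5.0 and 1.0 px respectively.
```

Each pixel's shift is a direct calculation from its own depth sample, with no search over candidate matches, which is the deterministic property the inventors emphasize.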

“Various embodiments described herein are directed to imaging systems and methods that employ separate luma, chroma and depth/distance sensors (generally referred to herein as three-dimensional (3-D) image sensors). As used herein, the image sensors may be cameras or any other suitable sensors, detectors, or other devices that are capable of obtaining the particular data, namely, luma data, chroma data and 3-D data.

“The systems and methods may involve or include both generating an alignment transform for the image sensors, and using the 3-D data from the 3-D image sensor to process disparity compensation. As used herein, imaging systems and methods should be considered to encompass image sensing, capture, processing, rendering and/or generating images.

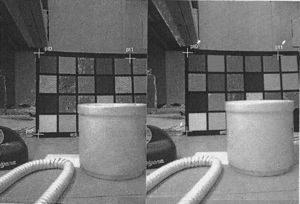

“Various embodiments contemplate generating the alignment transform once to calibrate the imaging system and then using the 3-D data obtained in real time to process disparity compensation in real time (for example, once per frame). The calibration may involve feature point extraction and matching and parameter estimation for the alignment transform. In embodiments, the alignment transform may be an affine transform. In embodiments, the affine transform may be applied on every frame for real-time processing. The real-time processing of disparity compensation may be accomplished by using the real-time 3-D data obtained for each respective frame.
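The one-time calibration step can be sketched as a least-squares fit of a 2×3 affine transform to matched feature points. This Python sketch assumes feature point extraction and matching have already produced corresponding point pairs (the pairs below are synthetic, generated from a known transform), and the helper names are hypothetical. A production system would also need to reject mismatched pairs, which this sketch does not attempt.

```python
# Hypothetical sketch of affine calibration from matched points (not Apple's code).

def solve3(m, v):
    """Solve the 3x3 linear system m @ p = v by Gaussian elimination with partial pivoting."""
    a = [list(row) + [v[i]] for i, row in enumerate(m)]  # augmented matrix
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration (e.g. all points collinear)")
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(col + 1, 3):
            factor = a[r][col] / a[col][col]
            for c in range(col, 4):
                a[r][c] -= factor * a[col][c]
    p = [0.0, 0.0, 0.0]
    for r in range(2, -1, -1):  # back-substitution
        p[r] = (a[r][3] - sum(a[r][c] * p[c] for c in range(r + 1, 3))) / a[r][r]
    return p


def estimate_affine(src, dst):
    """Least-squares 2x3 affine transform T with T @ [x, y, 1] ~= [u, v]
    for every matched pair ((x, y), (u, v))."""
    if len(src) != len(dst) or len(src) < 3:
        raise ValueError("need at least three matched point pairs")
    # Normal equations (A^T A) p = A^T b, where each row of A is [x, y, 1].
    ata = [[0.0] * 3 for _ in range(3)]
    atu = [0.0] * 3
    atv = [0.0] * 3
    for (x, y), (u, v) in zip(src, dst):
        row = (x, y, 1.0)
        for i in range(3):
            for j in range(3):
                ata[i][j] += row[i] * row[j]
            atu[i] += row[i] * u
            atv[i] += row[i] * v
    return [solve3(ata, atu), solve3(ata, atv)]


def apply_affine(t, point):
    """Map one (x, y) point through a 2x3 affine transform."""
    x, y = point
    return (t[0][0] * x + t[0][1] * y + t[0][2],
            t[1][0] * x + t[1][1] * y + t[1][2])


# Calibration (done once): synthetic matches from a slight scale plus an offset.
true_t = [[1.01, 0.0, 2.0], [0.0, 1.01, -1.0]]
chroma_pts = [(0, 0), (10, 0), (0, 10), (10, 10), (5, 3)]
luma_pts = [apply_affine(true_t, p) for p in chroma_pts]
t = estimate_affine(chroma_pts, luma_pts)

# Per frame: the stored transform is simply reapplied to chroma sample coordinates.
print(apply_affine(t, (3, 4)))
```

Fitting happens once; afterwards, applying six stored coefficients per pixel is cheap, which fits the description of running the affine transform on every frame while the depth-based disparity correction is recomputed frame by frame.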

“In particular, some embodiments may take the form of an imaging system. The imaging system may include: a first image sensor configured to obtain luminance data of a scene; a second image sensor configured to obtain chrominance data of the scene; a third image sensor configured to obtain three-dimensional data of the scene; and an image processor configured to receive the luminance data, the chrominance data and the three-dimensional data and to generate a composite image corresponding to the scene from the luminance data, the chrominance data and the three-dimensional data.

“Yet other embodiments may take the form of an imaging method. The imaging method may include: obtaining luminance data of a scene using a first image sensor; obtaining chrominance data of the scene using a second image sensor; obtaining three-dimensional data of the scene using a third image sensor; and processing the luminance data, the chrominance data and the three-dimensional data to generate a composite image corresponding to the scene.

“Still other embodiments may take the form of a computer-readable storage medium. The computer-readable storage medium may include stored instructions that, when executed by a computer, cause the computer to perform any of the various methods described herein and/or any of the functions of the systems disclosed herein.

“It should be understood that, although the description is set forth in terms of separate luma and chroma sensors, the systems and methods described herein may be applied to any multiple-sensor imaging system or method. For example, a first RGB (red-green-blue) sensor and a second RGB sensor may be employed. Further, the approaches described herein may be applied in systems that employ an array of sensors, such as an array of cameras. As such, it should be understood that the disparity compensation described herein may be extended to systems employing more than two image sensors.”

— Dennis Sellers